Tool Evaluation

QUICK TEST - 'Superpowers' for Agentic Coding: A Practical Evaluation

Superpowers is a “skills”-based prompt framework for agentic coding. I tested it on a physics problem and found it helpful for structuring planning, debugging, and exploration.

I recently ran a small test of the “Superpowers” workflow; this is a short report on it. The write-up is informal but may be useful to others exploring similar tooling. The workflow comes from the open-source repository https://github.com/obra/superpowers

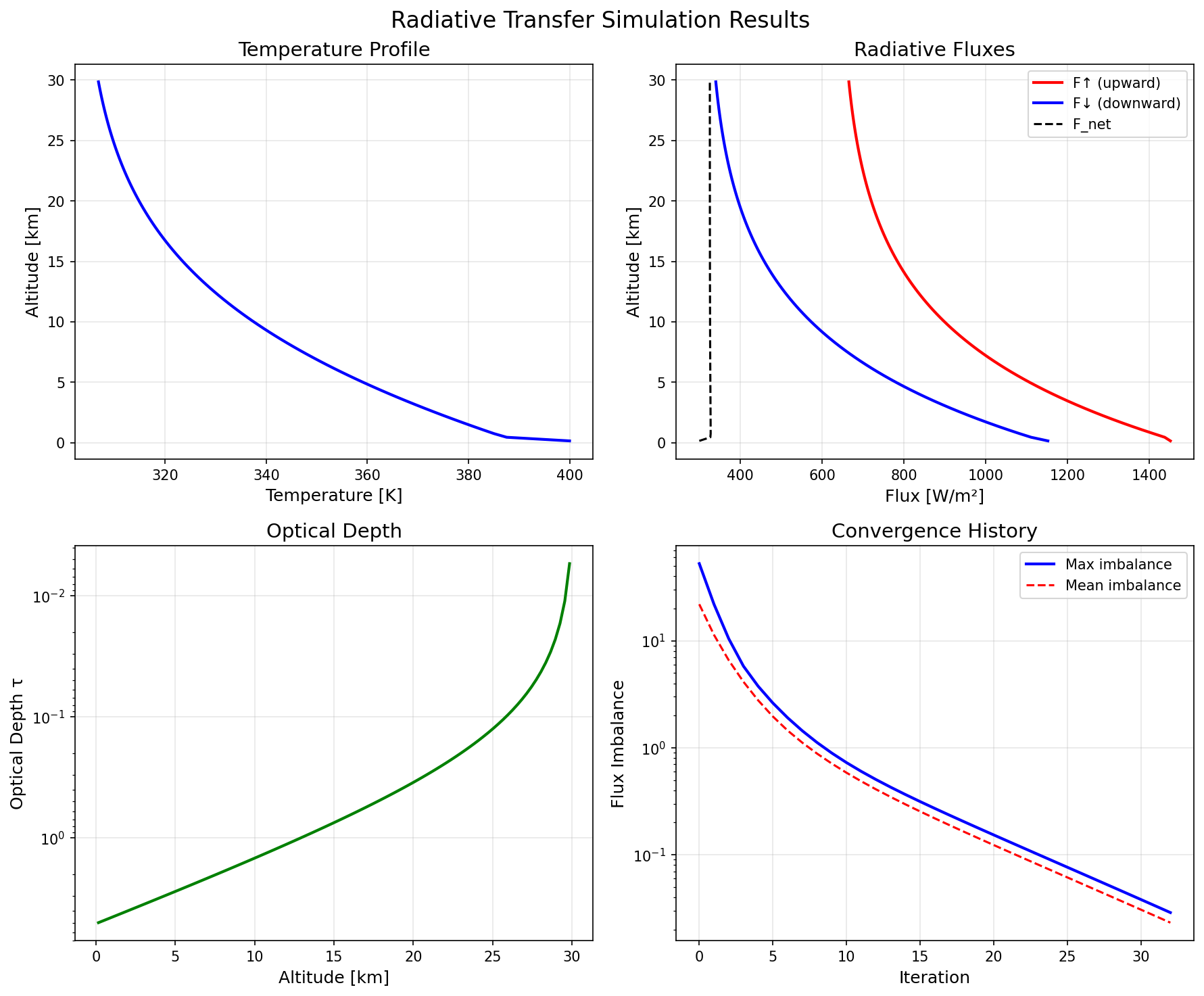

The test case was a radiative transfer problem: modeling a planetary atmosphere and producing corresponding visualizations. In simple terms, this involves modeling how radiation propagates through a medium while being absorbed and emitted, and is commonly solved using numerical methods. This provided a sufficiently technical and structured environment to evaluate how the workflow performs under non-trivial conditions.
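
To make the description concrete, here is a minimal sketch of the kind of numerical method involved. It is not the code produced in this test. It assumes a plane-parallel, gray, non-scattering atmosphere split into layers with a constant source function in each; the function name `propagate_intensity` and all numbers are hypothetical. Each layer attenuates the incoming intensity by exp(-Δτ) and adds its own emission.

```python
import math


def propagate_intensity(i_boundary, source_per_layer, dtau_per_layer):
    """March intensity through layers along one ray.

    Assumption: each layer has a constant source function, so the formal
    solution of dI/dtau = S - I across a layer is
    I_out = I_in * exp(-dtau) + S * (1 - exp(-dtau)).
    """
    intensity = i_boundary
    for source, dtau in zip(source_per_layer, dtau_per_layer):
        transmission = math.exp(-dtau)  # fraction of incoming light that survives
        intensity = intensity * transmission + source * (1.0 - transmission)
    return intensity


# Hypothetical isothermal column: 50 layers, total optical depth 2, source = 1.
n_layers = 50
total_tau = 2.0
sources = [1.0] * n_layers
dtaus = [total_tau / n_layers] * n_layers

numeric = propagate_intensity(0.0, sources, dtaus)
analytic = 1.0 - math.exp(-total_tau)  # closed form for an isothermal slab
print(numeric, analytic)
```

For an isothermal column the layered result should agree with the closed-form solution, which makes it a cheap first correctness check before moving to a realistic temperature profile.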

It’s worth clarifying upfront that “Superpowers” is not software in the traditional sense. Rather, it is a collection of structured “skills”: prompt scaffolding designed to guide agentic coding workflows. In this case, I tested it within Claude Code.

This distinction matters because it makes evaluation less clean: it is difficult to isolate how much of the outcome is attributable to the “Superpowers” layer versus the underlying capabilities of Claude Code itself. At the same time, the interaction is not entirely opaque. It was possible to observe that the “Superpowers” layer was actively engaged. I also explicitly invoked some of the skills during later stages of the workflow, most notably during debugging and iterative refinement. This suggests that, while not operating as a standalone system, the framework does have a detectable and functional role within the overall process.

First Impressions

The most noticeable impact is on the planning and debugging phases. The workflow encourages more explicit structuring before implementation begins, which improves clarity and is likely beneficial for users who prefer a more systematic approach.

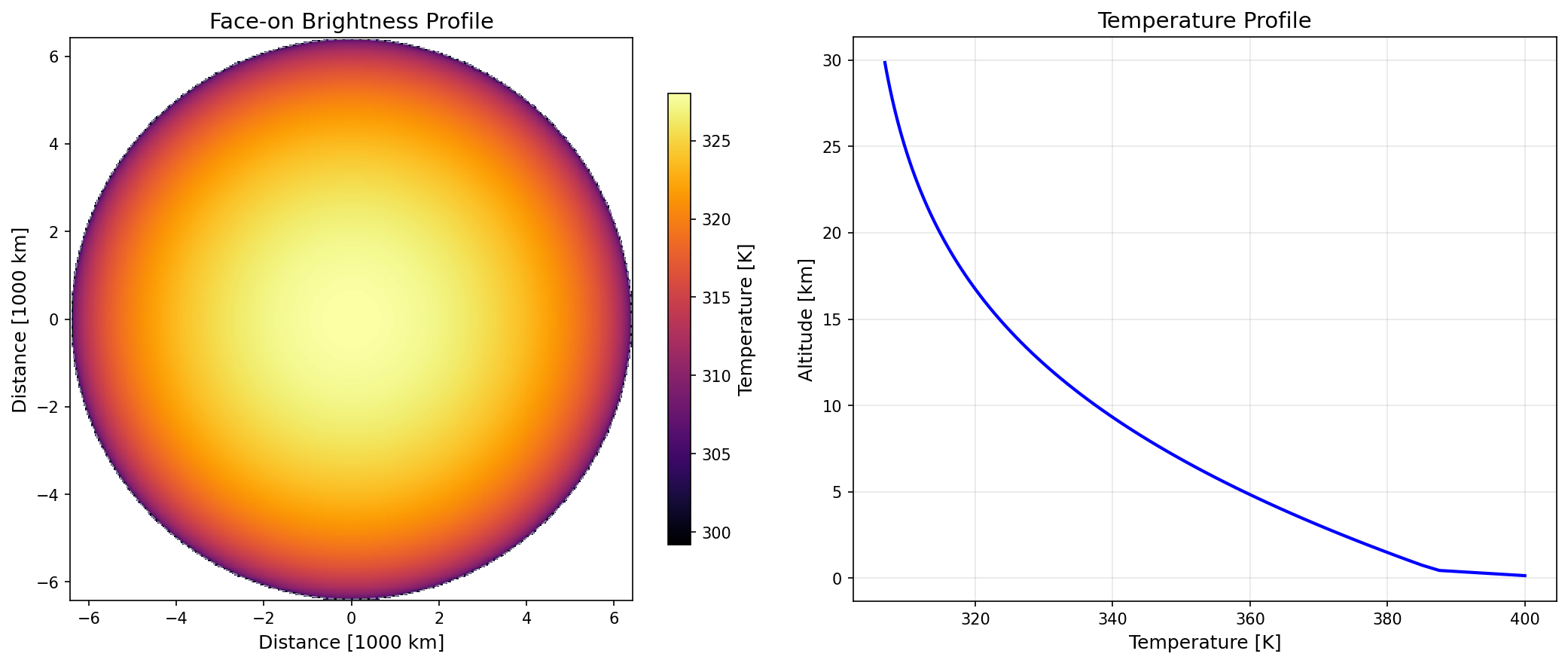

One practical benefit came after the main code was working: I used the planning and brainstorming stages to explore alternative visualizations. I was able to prompt the system to generate more “flashy” or presentation-oriented outputs rather than strictly scientific plots. In particular, it produced a small web-based interface showcasing different rendering styles, which made it easier to compare options and decide how to present the results.

At a higher level, this approach is not fundamentally new. Variants of “skill-based” or prompt-layer steering for agentic systems have existed for some time. What “Superpowers” offers is a relatively well-executed and packaged version of this idea.

Token Usage and Efficiency

One of my initial concerns was that the added structure would significantly increase token usage. This turned out to be less of an issue than expected.

While the workflow introduces more verbosity upfront, this appears to reduce ambiguity and limit unnecessary iterations. In this test, overall token usage was comparable to, and possibly lower than, that of a less structured approach.

During debugging, however, token usage can increase due to the more systematic approach the workflow encourages. This adds some cost, but also makes it easier to identify issues. In this case, it helped surface not only implementation bugs but also physics-related errors, which made the additional usage worthwhile.

Performance on a Technical Task

On the task itself, the system was able to generate a working radiative transfer calculation and produce meaningful visualizations. By a narrow definition, this constitutes a successful outcome.

However, this success is conditional.

The process still relies heavily on domain expertise. Identifying a key issue required recognizing that the physical behavior of the result was clearly incorrect, which the system did not flag on its own. While it was effective at helping diagnose and fix the issue once pointed out, it did not independently question the validity of the result.

This highlights a core limitation: qualitative correctness remains difficult to assess automatically. A non-expert user would likely not notice such issues, even if the code executes without errors.
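
Part of this gap can be narrowed with explicit plausibility checks, though they do not replace expert review. The sketch below is a generic illustration, not the check used in this test. It assumes a non-scattering medium, where the intensity along a ray should stay between the smallest and largest of the boundary intensity and the layer source values; the function name `find_unphysical_values` and the sample profiles are hypothetical.

```python
def find_unphysical_values(intensities, lower_bound, upper_bound, tolerance=1e-9):
    """Return (index, value) pairs that are NaN or fall outside the bounds.

    Assumption: the caller supplies physically motivated bounds, for example
    the min and max of the boundary intensity and the source function.
    """
    problems = []
    for index, value in enumerate(intensities):
        is_nan = value != value  # NaN is the only float not equal to itself
        out_of_range = value < lower_bound - tolerance or value > upper_bound + tolerance
        if is_nan or out_of_range:
            problems.append((index, value))
    return problems


# Hypothetical intensity profiles along a ray, with sources bounded by [0, 1].
good_profile = [0.0, 0.3, 0.6, 0.8]
bad_profile = [0.0, 0.3, -0.2, 1.7]  # runs without error, but is not physical

print(find_unphysical_values(good_profile, 0.0, 1.0))  # []
print(find_unphysical_values(bad_profile, 0.0, 1.0))   # [(2, -0.2), (3, 1.7)]
```

A check like this only catches violations someone thought to encode, so it complements the domain judgment described above rather than substituting for it.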

Throughout the test, I let the agent do the implementation work and relied on my own judgment to assess the results. The result is not something I would trust for scientific use on its own. It is suitable for demonstrations or exploratory work, but not for physics applications without thorough validation, independent testing, and careful code review.

Where the Value Actually Lies

The primary value of this approach is in systematic structure.

By making planning and execution stages more explicit, the workflow improves transparency and coherence. The development process becomes easier to follow and reason about, even if the level of required user involvement remains largely unchanged.

In other words, it does not eliminate the need for expertise, but it supports more effective interaction between the expert and the coding system.

Final Takeaway

“Superpowers” (https://github.com/obra/superpowers) is best understood as a solid implementation of the skills approach. It integrates well with tools like Claude Code and offers tangible improvements, particularly in planning and workflow clarity. It is a useful addition to the toolkit, and one I would be happy to use again.